The Intelligent Friend - The newsletter about AI-human relationships, based only on scientific papers.

Hello everyone! This week I launched a paid version of this publication. It includes a new weekly issue, the Nucleus, with summaries of 4 papers and many other interesting things (every Wednesday), and the Society, an exclusive chat where you can talk about AI, exchange ideas and discuss your projects. All at the minimum price on Substack: only 5 USD.

I wanted to thank you! Some people have already signed up and in general the reception has been super positive. If you want to have a look, I have published a first issue open to everyone; you can find it here. From next Wednesday, the Nucleus and the Society will become exclusive to paid subscribers, for only 5 USD.

That said, let's start with today's issue (which is and will always remain free!).

Intro

Ask Alexa what time it is. To set a reminder. To buy batteries. Alexa is your voice assistant, but what is the relationship you perceive between you? Do you 'see her' as an assistant, as a companion, or is your interaction simply an opportunistic exchange? Today, let us try to understand what kind of relationships we form in our minds with our voice assistants and, more importantly, what that entails.

The paper in a nutshell

Title: Servant by Default? How Humans Perceive Their Relationship With Conversational AI. Authors: Tschopp, Gieselmann, Sassenberg. Year: 2023. Journal: Journal of Social and Personal Relationships.

Main result: users perceive the relationship with conversational AI more as a master-assistant relationship and as an exchange relationship than as a companion-like one.

Using the Relational Models Theory, the authors tried to understand how humans perceive their relationship with AI, leading to an innovative approach in the study of relationships.

Conversational AI and relationships

Do you know who has received some of the most marriage proposals (so far)? Alexa. According to one estimate, users ask the Amazon assistant to marry them about 6,000 times a day. In India alone, people say 'I love you' to Alexa 19,000 times a day [1].

Alexa is present in many homes, as are Google Home and other forms of what are called Voice Conversation Agents (VCAs). But the really interesting fact is not their commercial spread; it is the relationships we build with them. As you know, we have already talked in a previous issue about the possibility of feeling 'passion' or 'love' for virtual assistants.

Those mentioned are all devices that belong to the broader field of Conversational AI: a technology that enables chatbots and virtual assistants to interact with humans naturally through text or voice inputs and outputs, replicating human-like communication.

Now, we use Alexa every day and for a wide variety of tasks. However, the relationship we build with this tool varies greatly from person to person. For some it can be a friend, a lover or simply an assistant. In short, what are the most common ways in which people perceive their interactions with VCAs? To answer this, the scholars applied a renowned model by Fiske (1992) [2], called Relational Models Theory (RMT), which is used to study human relationships.

Relational Models Theory (RMT)

Now, to talk about it, imagine these 4 types of people: 1) your dad; 2) a group of colleagues or ex-colleagues; 3) your roommate and 4) your mates from a sports team (whatever sport you prefer!).

Even if you just put these four subjects together (a subject can also be a group of people), you might get a strange feeling. Your dad, as friendly and sweet as he may be, is still - or was - a person in authority, who had to protect you and tell you what was OK and what was not (in different ways, but more or less true for all dads). Your colleagues were people who were mainly focused on achieving the outcome of a project or presentation. With your teammates in football, ballet or cricket, you felt as one, ready to challenge anyone out there. Finally, your roommate is or was a person with whom you formed a more or less close relationship.

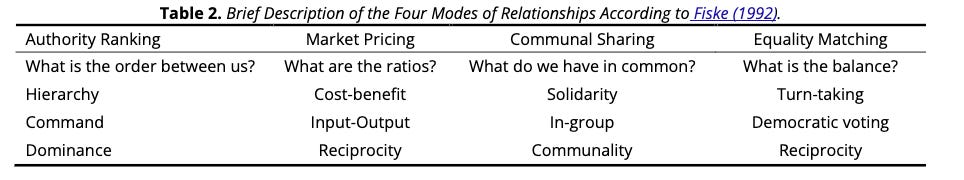

Well, these four types represent none other than the 'Four Modes of Relationships' according to Fiske. That is, the four dimensions on which humans perceive relationships with other humans:

Authority Ranking: a hierarchical relationship where individuals are ranked, with higher ranks holding command and responsibility to protect those below them (your dad);

Market Pricing: focuses on rational cost-benefit analysis, where interactions are transactional and rewards such as money are expected in return for contributions (work environments);

Communal Sharing: found in closely-knit groups like tribes, where individuals treat each other as equals and emphasize shared commonalities (sports teams);

Equality Matching: characterizes relationships where reciprocity is key, maintaining an egalitarian balance, often seen in peer-to-peer interactions where exchanges are direct and equivalent (your roommate).

Of course, these modes do not necessarily operate separately: several can be at work in the same relationship at the same time.

Rational or emotional?

Now, these are the ways we evaluate our relationships with humans. What happens when we turn to AI instead? This was the basis for the scholars' hypotheses. Let's think about that for a second. When you interact with Alexa, you might feel a little like a boss giving orders to an assistant. It is no coincidence that we often describe these technologies as assisting people in daily tasks. At the same time, however, despite the aspects that resemble human relationships, you use Alexa above all because you need it. Because it is useful to you. Because it amuses you or helps you. In short, you want to get something from her.

Hence the scholars hypothesize that authority ranking and market pricing carry much greater weight in human-AI relationships than the other two "modes".

But the hypotheses don't stop there. Because these "modes" actually interact with a series of more or less rational or emotional factors. This awareness is widespread in the literature: many studies follow a dichotomy that distinguishes between rational and emotional aspects [3][4].

Alexa is still a technological tool. As such, we, above all as consumers, cannot help but evaluate its capabilities. Hu et al. (2022) [5] have, for example, already shown that authority ranking is closely linked to this competence assessment.

But the perception of the "system" (Alexa) goes even further. When I talk about the importance of the relational aspects of AI, I mean that they matter not only for the user as a person, but also for the user as a consumer.

Those who categorized AI systems as friends, for example, reported higher levels of trust and less "social distance" [6]. This matters a great deal for the companies producing these systems, and it also has consequences for how the systems are used. Of course, in these relationships the communal sharing mode will be much more frequent.

In fact, the evidence suggests that those who have an emotional relationship with a machine actually assume that it is characterized by feelings similar to those of human friends, applying constructs that are specific to human interactions [7].

For this reason, the authors hypothesize that equality matching and communal sharing are positively linked to the emotional dimensions of perceptions of the system, such as psychological distance (which we also talked about in a previous issue!), anthropomorphism, and trust.

So, how do we perceive the relationship?

In two studies, the authors asked participants about their perceptions of the technology. In the first study, participants also indicated a specific conversational AI (63% chose Alexa). The second study used slightly different measures from the first, but with the same aim: understanding, through users' responses, how they characterized their relationship with AI. The results were not without surprises.

First of all, the emotional side of the relationship with AI, captured by the communal sharing and equality matching modes, proved to be significant but less distinct: in both studies these two modes "merged" into a single dimension that the authors called "peer bonding".

Participants in the study typically viewed their interactions with conversational AI through the lenses of authority ranking and market pricing, indicating a structured and transactional nature to these relationships. Emotional connection and peer-like bonds were notably weaker compared to these more structured interactions.

This is certainly a very important result: when you think about your relationship with AI, you are likely to characterize it in terms similar to a relationship with a human, but at a deeper level your perceptions lack those purely human elements. So there is still a profound distance in terms of perceptions.

While authority ranking did not show strong correlations with specific system perceptions or user characteristics, market pricing correlated with both rational variables (e.g., competence concerns) and emotional ones (e.g., sense of inclusion with the AI). Perhaps even more interesting, then, the modes do not map cleanly onto either rational or emotional aspects: even a mode that we think of as purely evaluative and analytical, such as market pricing, can carry emotional nuances, which adds complexity to the analysis of human-AI relationships.

In summary, people see AI mainly as an assistant that takes their orders (authority ranking) and as a tool to be evaluated on the benefits it provides (market pricing). These two perspectives are not mutually exclusive and can coexist in the same relationship with AI, and market pricing in particular is tied to emotional as well as rational perceptions.

Finally, even though peer bonding (feeling like equals with the AI) was the least common way people described the relationship, it was the strongest predictor of how close they felt to the AI, how human-like they perceived it, and how friendly they thought it was.

Take-aways

Authority: common but not so powerful. The study suggests people see conversational AI primarily as an assistant that takes their orders: this is the "authority ranking" mode. Surprisingly, it is not strongly correlated with how people perceive the AI itself.

A rational and emotional exchange. The other dominant mode from Fiske's model (used as a basis by the scholars) was "market pricing", in which we evaluate the benefits we obtain from a relationship. Despite its analytical nature, it correlated with emotional as well as rational aspects.

Alexa, we are friends less frequently. A new dimension emerges, "peer bonding", which unites the two more emotional modes of the reference model. Despite being the least common, it was the strongest predictor of how close people felt to the AI and how human-like they perceived it to be.

Further research directions

Examine the highlighted relationships through a longitudinal approach, which can also highlight more complex nuances;

Changes to the AI's technical features, voice and/or other characteristics could further influence perceptions of the relationship, and could provide very promising avenues of research for scholars;

In addition to investigating user opinions, one could also investigate users' actual behavior, or examine the influence on specific outcomes (I would suggest, for example, the intention to use the AI more frequently, or loyalty).

Thank you for reading this issue of The Intelligent Friend and/or for subscribing. The relationships between humans and AI are a crucial topic and I am glad to be able to talk about it having you as a reader.

1. Amazon. (2021, February). Customers in India say “I love you” to Alexa 19,000 times a day. Amazon. https://www.aboutamazon.in/news/devices/customers-in-india-say-i-love-you-to-alexa-19-000-times-a-day

2. Fiske, A. P. (1992). The four elementary forms of sociality: Framework for a unified theory of social relations. Psychological Review, 99(4), 689-723.

3. Glikson, E., & Woolley, A. W. (2020). Human trust in artificial intelligence: Review of empirical research. Academy of Management Annals, 14(2), 627-660.

4. Malle, B. F., & Ullman, D. (2021). A multidimensional conception and measure of human-robot trust. In Trust in human-robot interaction (pp. 3-25). Academic Press.

5. Hu, P., Lu, Y., & Wang, B. (2022). Experiencing power over AI: The fit effect of perceived power and desire for power on consumers' choice for voice shopping. Computers in Human Behavior, 128, 107091.

6. Pitardi, V., & Marriott, H. R. (2021). Alexa, she's not human but… Unveiling the drivers of consumers' trust in voice‐based artificial intelligence. Psychology & Marketing, 38(4), 626-642.

7. Han, S., & Yang, H. (2018). Understanding adoption of intelligent personal assistants: A parasocial relationship perspective. Industrial Management & Data Systems, 118(3), 618-636.