The Intelligent Friend - The newsletter about AI-human relationships, based only on scientific papers.

Intro

It is no mystery that we attribute human characteristics to inanimate objects, a phenomenon called “anthropomorphism”. It is even less of a mystery that this dynamic extends to robots, virtual assistants and chatbots. Many scholars have tried to understand the effects of this process. However, little research has examined how our own mindset influences our responses. Today's paper takes up the challenge.

The paper in a nutshell

Title: Partners or Opponents? How Mindset Shapes Consumers’ Attitude Toward Anthropomorphic Artificial Intelligence Service Robots. Authors: Kim et al. Year: 2023. Journal: Journal of Service Research.

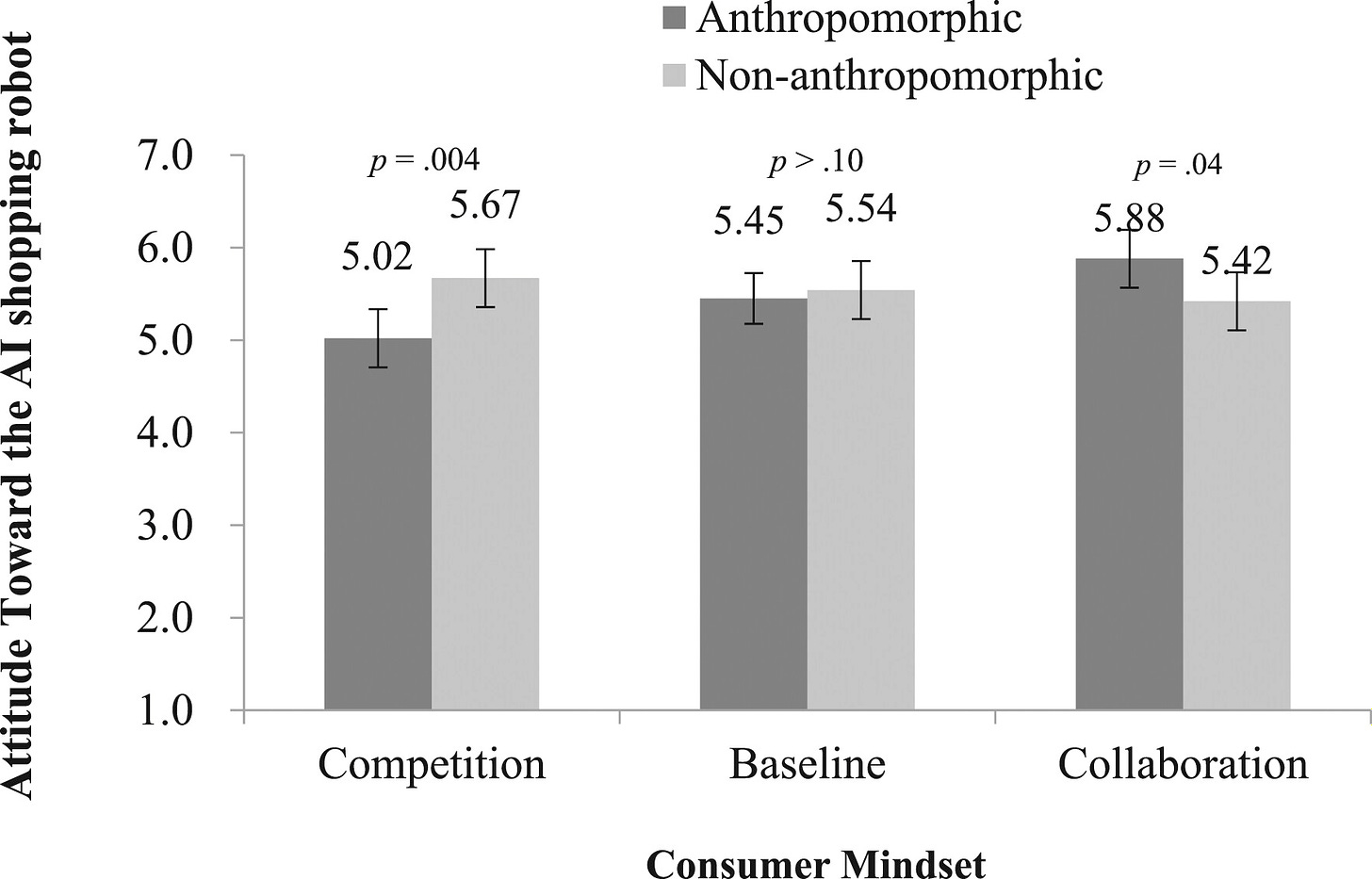

Main result: consumers with a competitive mindset respond less favorably to anthropomorphic (vs. non-anthropomorphic) AI robots, whereas consumers with a collaborative mindset respond more favorably to them.

In the context of service robots, the study investigates not only the role of our mindset in our relationship with AI, but also the role of perceived psychological closeness to the robot.

Anthropomorphism and our reactions

Consider the times you've talked to technology as if it were a friend, apologized to ChatGPT for the tasks you've asked of it, or viewed your PC as a daily travel companion. These are instances where we attribute human traits to technologies lacking them; in other words, we are anthropomorphizing.

Anthropomorphism involves ascribing human traits, intentions, or emotions to non-human entities or objects [1].

Numerous AI creators intentionally incorporate anthropomorphic traits into their robots' hardware, like human-like forms, and software, such as showing emotions or mimicking human voices [2]. Considering the varying degrees to which AI agents mimic human traits, a crucial inquiry centers on the impact these humanlike attributes have on their interactions with users [3].

Findings on the effects of anthropomorphism have diverged considerably over time. The authors have built a very useful table summarizing the main results (you can find it in the study). In brief, some scholars have reported mainly positive effects [4] [5] [6], others mainly negative ones [7] [8].

Among the former, I found the work of Kang and Kim (2020) [9] particularly intriguing. It shows how, in the context of the IoT (Internet of Things), anthropomorphism improves users' sense of connection with their devices and offers a practical way to ease tensions between user agency and device agency.

The concept of agency might seem hard to grasp, but it is not. Think of any situation where you find yourself using a smart device, such as an Alexa-controlled light bulb or an innovative coffee machine.

Users can customize smart devices to their liking, or let the devices autonomously adapt their functions based on the data collected, creating a personalized experience. For example, using apps such as If This Then That, users can connect their smartphones to control smart devices, which demonstrates user autonomy. Conversely, devices such as smart bulbs, which adjust their settings automatically, demonstrate device autonomy. This dual ability to initiate and adapt in human-IoT interactions underlines the agency present in both users and devices.

Kang and Kim found that users feel more positive about their interactions when they control the devices themselves, rather than when the devices act independently. Moreover, when devices do act on their own, attributing human qualities to them enhances user satisfaction by fostering a greater sense of connection.

Now, as you can imagine and as these studies show, anthropomorphism is particularly important from a relationship perspective. Research on our relationship with AI, however, has often focused on the characteristics of the chatbot, leaving out (at least in the field of services) any in-depth study of how the characteristics of the person influence the outcome of the interaction. For instance, if we approach a person with a positive attitude, the interaction will likely go differently than if we approach them in a hyper-competitive or grumpy way. We can apply the same reasoning to machines.

Competitive and collaborative mindset

Recognizing whether someone has a competitive or collaborative mindset isn't usually difficult for us, and we often notice the impact of these attitudes.

Research indicates that competitive individuals prefer working alone, with goals that may conflict with others [10], while collaborative individuals enjoy teamwork and contributing to shared objectives [11].

Studies suggest our behavior towards anthropomorphic AI mirrors our interactions with humans. This led the authors of today’s study to propose that our mindset might shape our responses to AI, affecting how we react to technology that resembles humans more or less closely.

Indeed, the scholars found these intriguing results:

Competitive consumers showed less positive reactions towards anthropomorphic AIs than non-anthropomorphic ones, while collaborative consumers showed a preference for anthropomorphic AIs.

In a neutral scenario, attitudes towards both types of AI were similar.

Furthermore, in the context of robot anthropomorphism, perceptions of the anthropomorphic robot varied significantly among different consumer mindsets.

Basically, if you are a person with a competitive mindset, it is quite likely that you will not react well to a humanlike robot helping you order something, do your shopping or pay your bills. If you are a collaborative person instead, it is likely that you will.

This result, however intriguing, does not explain one thing: why do people with a competitive mindset behave this way? What makes them averse to an anthropomorphized robot but not to a non-anthropomorphized one?

Psychological Closeness

“I feel close to you.”

How many times have we said something like this? Feeling psychologically close to someone matters not only in everyday relationships, but also has a great impact on consumers and on the services we rely on. According to psychological research, we can perceive 'closeness' simply by sharing the same name [12] or the same birthday [13] [14] as someone else, or when we are asked to take someone's perspective [15]. The construct of 'psychological closeness' encapsulates all this.

Psychological closeness is described as feelings of attachment and connection to others [16].

Feeling psychologically close to someone can be very powerful, so much so that it can separate those who form a positive attitude towards something from those who react in the opposite way. However, not all people are the same. Some people tend to feel psychologically close to others more easily. Others, being by nature less sociable or simply less open, may not feel the relevance of this dynamic. And if this is true for people, the authors hypothesize that it is also true for machines.

Consumers with a collaborative mindset, who naturally feel a connection to others, may experience increased feelings of closeness to anthropomorphic robots, leading to more favorable views. However, this effect may diminish if the robots seem psychologically distant. Competitive mindset consumers, feeling less connected to people, may see anthropomorphic robots as less psychologically close, resulting in less positive attitudes towards them.

This suggests an interaction between the consumer's mindset and their reactions to robot anthropomorphism: how psychologically close a person feels to the anthropomorphized robot is the reason why people with different mindsets react differently.

The authors' results confirm their hypothesis:

Competitive mindset consumers showed a preference for non-anthropomorphic over anthropomorphic AI robots, a sentiment not shared by those with a collaborative mindset, who favored anthropomorphic robots.

This divergence stems from competitive consumers feeling less connected to anthropomorphic robots, leading to less positive attitudes, while collaborative consumers felt a stronger connection to these robots, resulting in more favorable views.

In summary, the authors demonstrate something really interesting and with many practical applications: when encountering an anthropomorphic robot, consumers see it as a social being and apply social norms to their interactions.

Competitive consumers, compared to collaborative consumers, may feel less connected to the robot, which elicits negative responses. In contrast, with non-anthropomorphic robots, which are not viewed as social entities, the consumer's competitive or collaborative mindset makes no difference to their reactions.
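For readers who like to see a chain of reasoning written out, here is a minimal sketch of the pattern described above. It is not the authors' statistical model: the names `Mindset`, `closeness_shift` and `attitude_shift` are hypothetical, and the values -1, 0 and +1 only encode the direction of the reported effects relative to a non-anthropomorphic robot, not effect sizes from the paper.

```python
from enum import Enum


class Mindset(Enum):
    COMPETITIVE = "competitive"
    COLLABORATIVE = "collaborative"
    NEUTRAL = "neutral"


def closeness_shift(mindset: Mindset, anthropomorphic: bool) -> int:
    """Direction of change in felt closeness, relative to a non-anthropomorphic robot.

    Returns -1 (less close), 0 (no difference) or +1 (closer).
    Signs only: these are illustrative, not numbers from the study.
    """
    if not anthropomorphic:
        # Baseline case: a non-humanlike robot is not treated as a social
        # entity, so mindset does not come into play.
        return 0
    if mindset is Mindset.COMPETITIVE:
        return -1
    if mindset is Mindset.COLLABORATIVE:
        return 1
    return 0  # neutral mindset: similar attitudes towards both robot types


def attitude_shift(mindset: Mindset, anthropomorphic: bool) -> int:
    # Mediation step: attitude moves in the same direction as felt closeness.
    return closeness_shift(mindset, anthropomorphic)


LABELS = {-1: "less favorable", 0: "no difference", 1: "more favorable"}

for mindset in Mindset:
    print(mindset.value, "->", LABELS[attitude_shift(mindset, True)])
```

The point of the two-step structure is that mindset does not act on attitude directly: it changes how close the humanlike robot feels, and attitude follows from that.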

Take-aways

Divergence on anthropomorphism: research on the effects of anthropomorphism varies, with no clear positive or negative impact on user attitudes. Today's study focuses on the service context.

Competitive vs collaborative: competitive consumers show less positive attitudes towards anthropomorphic robots compared to non-anthropomorphic ones, while collaborative consumers show more favorable responses.

The role of psychological closeness: this factor explains why competitive and collaborative consumers react differently, with competitive mindsets leading to decreased closeness and collaborative mindsets to increased closeness to the robot.

Further research directions

This study applies psychological insights from human relationships to anthropomorphic robots, suggesting these dynamics might also relate to interactions with human employees. It raises the question of whether the effects noted with AI robots would similarly apply or be more pronounced with human counterparts.

The research hints at various marketing strategies that could encourage cooperation or competition, such as social media contests that engage followers, and these could be explored further.

It's noted that cooperation and competition vary across cultures, presenting an avenue for future research to explore how cultural contexts influence consumer reactions to anthropomorphic robots and whether other cultural factors could impact these interactions.

Thank you for reading this issue of The Intelligent Friend and/or for subscribing. The relationships between humans and AI are a crucial topic and I am very happy to be able to talk about it with you as a reader.


1. Epley Nicholas, Waytz Adam, Cacioppo John T. (2007), “On Seeing Human: A Three-Factor Theory of Anthropomorphism,” Psychological Review, 114 (4), 864-86.

2. Qiu Lingyun, Benbasat Izak (2009), “Evaluating Anthropomorphic Product Recommendation Agents: A Social Relationship Perspective to Designing Information Systems,” Journal of Management Information Systems, 25 (4), 145-82.

3. Duffy Brian R. (2003), “Anthropomorphism and the Social Robot,” Robotics and Autonomous Systems, 42 (3-4), 177-90.

4. Broadbent Elizabeth, Kumar Vinayak, Li Xingyan, Sollers John, Stafford Rebecca Q., Macdonald Bruce A., Wegner Daniel M. (2013), “Robots with Display Screens: A Robot with a More Humanlike Face Display Is Perceived to Have More Mind and a Better Personality,” PLoS ONE, 8 (8), e72589.

5. Van Pinxteren Michelle M. E., Wetzels Ruud W. H., Rüger Jessica, Pluymaekers Mark, Wetzels Martin (2019), “Trust in Humanoid Robots: Implications for Services Marketing,” Journal of Services Marketing, 33 (4), 507-18.

6. Waytz Adam, Heafner Joy, Epley Nicholas (2014), “The Mind in the Machine: Anthropomorphism Increases Trust in an Autonomous Vehicle,” Journal of Experimental Social Psychology, 52 (May), 113-17.

7. Ackerman Evan (2016), “Study: Nobody Wants Social Robots that Look like Humans Because They Threaten Our Identity,” IEEE Spectrum, 1-5.

8. Kim Sara, Chen Rocky Peng, Zhang Ke (2016), “Anthropomorphized Helpers Undermine Autonomy and Enjoyment in Computer Games,” Journal of Consumer Research, 43 (2), 282-302.

9. Kang Hyunjin, Kim Ki Joon (2020), “Feeling Connected to Smart Objects? A Moderated Mediation Model of Locus of Agency, Anthropomorphism, and Sense of Connectedness,” International Journal of Human-Computer Studies, 133, 45-55.

10. Stapel Diederik A., Koomen Willem (2005), “Competition, Cooperation, and the Effects of Others on Me,” Journal of Personality and Social Psychology, 88 (6), 1029-38.

11. Tauer John M., Harackiewicz Judith M. (2004), “The Effects of Cooperation and Competition on Intrinsic Motivation and Performance,” Journal of Personality and Social Psychology, 86 (6), 849-61.

12. Pelham B. W., Carvallo M., Jones J. T. (2005), “Implicit Egotism,” Current Directions in Psychological Science, 14 (2), 106-110.

13. Cialdini R. B., De Nicholas M. E. (1989), “Self-Presentation by Association,” Journal of Personality and Social Psychology, 57 (4), 626.

14. Finch J. F., Cialdini R. B. (1989), “Another Indirect Tactic of (Self-) Image Management: Boosting,” Personality and Social Psychology Bulletin, 15 (2), 222-232.

15. Gunia B. C., Sivanathan N., Galinsky A. D. (2009), “Vicarious Entrapment: Your Sunk Costs, My Escalation of Commitment,” Journal of Experimental Social Psychology, 45 (6), 1238-1244.

16. Gino F., Galinsky A. D. (2010), “When Psychological Closeness Creates Distance from One’s Moral Compass,” in IACM 23rd Annual Conference Paper, June.

Cover Credits: New Yorker

Hi Daniel! First of all, thank you for your comment.

I specifically reviewed the bibliography and the papers citing the study to answer you, and there are still no studies focusing on this aspect. I guess this is because the authors focus on how these human factors influence AI adoption, and not the other way around (at least when it comes to mindset). But this is an interesting avenue for future research. If you have advice or other topics you'd like to see in the next issue, please tell me! Seeing comments like yours and hearing your opinion is key to improving the newsletter every day.

P.S. I also believe we need a more collaborative society! At a recent event I attended, one of the guest speakers (the director of a national research institute in Italy) highlighted the possibility of, for example, European countries sharing data and efforts on the topic, to foster maximum collaboration rather than research in silos. I would be really curious to know your opinion on this!

Thanks for this! Do you wonder if there is a way to give agents anthropomorphic traits to get more competitive individuals to be more cooperative without coercion? It feels inherently manipulative, but there may be subtle ways to use conversational approaches for this (e.g., asking the user if they want to be more collaborative, which gives them control; I think we would be surprised at how adept people can be at getting feedback from a robot, much more so than from a human). Not that there is anything inherently wrong with being competitive, but it feels like we need to be a more collaborative society in many ways. Please keep up the good work.